Optimizing Exchange 2007 Servers

With the separation of various roles in Exchange 2007, individual optimizations vary from role to role. The following sections address the various roles in Exchange 2007 and how to optimize the performance of those roles.

Optimizing Mailbox Servers

Of all the servers in an Exchange 2007 environment, the Mailbox server role is the one that will likely benefit the most from careful performance tuning.

Mailbox servers have traditionally been very dependent on the disk subsystem for their performance. Although this has changed in Exchange 2007, it is important to understand that this change in disk behavior depends heavily on memory. As such, the general rule for performance on an Exchange 2007 Mailbox server is to configure it with as much memory as you can. For example, in Exchange 2003, if you had a load of 2,000 users who each generated an average of 1 disk I/O per second and you were running a RAID 0+1 configuration, you would need 4GB of memory and 40 disks (assuming 10k RPM disks and 100 random disk I/Os per second per disk) to get the performance you’d expect out of an Exchange server. In Exchange 2007, you could reduce the number of disks required by roughly 70% if you increased the system memory to 12GB.
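The arithmetic behind those disk counts can be sketched in a few lines of Python. This is only an illustration: it assumes the 40-disk figure comes from 20 disks' worth of random I/O doubled for the RAID 0+1 mirror, which the text implies but does not state, and the function names are hypothetical.

```python
import math


def disks_required(users, iops_per_user, iops_per_disk, mirror_factor=2):
    """Estimate spindle count for a mirrored (RAID 0+1) array.

    Assumption: the mirror doubles the number of disks needed to carry the load.
    """
    total_iops = users * iops_per_user                  # aggregate random I/O load
    data_disks = math.ceil(total_iops / iops_per_disk)  # disks needed to carry it
    return data_disks * mirror_factor


def apply_reduction(disks, reduction_percent):
    """Apply a percentage reduction, rounding up to whole disks."""
    return math.ceil(disks * (100 - reduction_percent) / 100)


exchange_2003_disks = disks_required(users=2000, iops_per_user=1, iops_per_disk=100)
exchange_2007_disks = apply_reduction(exchange_2003_disks, 70)
print(exchange_2003_disks, exchange_2007_disks)  # 40 12
```

Under these assumptions, the same 2,000-user load drops from 40 disks to about 12 once the additional memory absorbs most of the read I/O.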

As you can see, with a Mailbox server, the trick is to balance costs against performance. In large implementations, it is less expensive to replace high-performance disks with memory. This makes direct attached disks a viable choice for Exchange 2007 Mailbox servers.

Another area where a Mailbox server benefits in terms of performance is the disk subsystem. Although you’ve just seen that the disk requirements are lower than in previous versions of Exchange, this doesn’t mean that the disk subsystem is unimportant. This is another area where you must create a careful balance between cost, performance, and recoverability. The databases benefit the most from a high-performance disk configuration. Consider using 15k RPM drives because they offer more I/O performance per disk, generally 50% more random I/O capacity than a 10k RPM disk. Given the reduction in the number of disks needed to support the databases, you should consider using RAID 0+1 rather than RAID 5 so as not to incur the write penalties associated with RAID 5. The log files also need fast disks to be able to commit information quickly, but they have the advantage of being sequential I/O rather than random. That is, the write heads don’t have to jump all around the disk to find the object to which they want to write. The logs start at one point on the disk and they write sequentially without having to modify old logs.
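To make the RAID write penalty concrete, the following sketch compares the disk-level I/O that the same workload generates on RAID 0+1 and on RAID 5. It uses the commonly cited penalties of 2 disk operations per write for a mirror and 4 for RAID 5 (read data, read parity, write data, write parity). The 600 I/Os per second workload and the 50/50 read/write mix are hypothetical values chosen for illustration; the 150 I/Os per second per disk figure follows from the 50% improvement of a 15k RPM disk over a 100-I/O 10k RPM disk.

```python
import math

# Disk operations generated by one logical write (commonly cited values).
WRITE_PENALTY = {"raid10": 2, "raid5": 4}


def backend_iops(frontend_iops, write_fraction, raid_level):
    """Disk-level I/O per second produced by a host-level workload."""
    reads = frontend_iops * (1 - write_fraction)
    writes = frontend_iops * write_fraction
    return reads + writes * WRITE_PENALTY[raid_level]


def disks_needed(frontend_iops, write_fraction, raid_level, iops_per_disk):
    """Whole disks required to carry the disk-level load."""
    load = backend_iops(frontend_iops, write_fraction, raid_level)
    return math.ceil(load / iops_per_disk)


# Hypothetical workload: 600 I/Os per second, half of them writes, on 15k RPM disks.
for level in ("raid10", "raid5"):
    print(level, disks_needed(600, 0.5, level, iops_per_disk=150))
# raid10 6
# raid5 10
```

In this model the write-heavy workload needs noticeably more spindles on RAID 5, which is the penalty the text refers to.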

In a perfect world, the databases and logs are all on their own dedicated disks. Although this isn’t always possible, it does offer the best performance. In the real world, you might occasionally have to double up databases or log files onto the same disk. Be aware of how this affects recoverability. For performance, always separate the logs from the databases because their read/write patterns are very different. It also makes recovery of a lost database much easier.

Mailbox servers also deal with a large amount of network traffic. Email messages are often fairly small and as a result, the transmission of these messages isn’t always as efficient as it could be. Whenever possible, equip your Mailbox servers with Gigabit Ethernet interfaces. If possible, and if you aren’t clustering the Mailbox servers, try to run your network interfaces in a teamed mode. This improves both performance and reliability.

Because Mailbox servers also hold the public folder stores, consider running a dedicated public folder server if your environment heavily leverages public folders. Public folder servers often store very large files that users are accessing, so separating the load of those large files from the Mailbox servers results in better overall performance for the user community.

For companies that only lightly use public folders, some investigation of the environment is required to see whether it is better to run a centralized public folder server or to maintain replicas of public folders in multiple locations. This is usually a question of wide area network (WAN) bandwidth versus usage patterns.

Optimizing Mailbox Clusters

Mailbox servers in Exchange 2007 offer a new function known as Cluster Continuous Replication. Mailbox servers clustered in this way can be optimized in much the same way as standalone Mailbox servers. One of the key differences is that network interfaces should not be teamed on a Windows cluster. This is because the small amount of latency introduced in the load-balancing algorithm of the teaming can cause the cluster to believe that a node is not available and trigger an unnecessary failover of resources.

Mailbox clusters in Exchange 2007 should always be configured with multiple network interface cards (NICs) and a NIC should be dedicated to cluster traffic only. This helps ensure that the cluster heartbeat is always working properly. In the case of “local clusters,” you should always use a hub between the heartbeat NICs, so that link state is not lost if you reboot a system.

On a mailbox cluster, it is very important that the log files be placed on a fast disk subsystem. This is because in addition to storing transactions for the database, the logs are also shipped to the remote node for reprocessing. This is how the two nodes maintain similar information so that a failover can be accomplished without the need for shared storage.

Optimizing Client Access Servers

Client Access servers (CASs) tend to be more dependent on CPU and memory than they are on disk. Because their job is to simply proxy requests back to the Mailbox servers, they don’t need much in the way of local storage. The best way to optimize the Client Access server is to give it enough memory that it doesn’t need to page very often. By monitoring the page rate in the Performance Monitor, you can ensure that the CAS is running optimally. If it starts to page excessively, you can simply add more memory to it. Similarly, if the CPU utilization is sustained above 65% or so, it might be time to think about more processing power.
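The two checks described here can be expressed as a simple rule over collected counter samples. The sketch below is a hedged illustration rather than a Microsoft-provided method: the 65% CPU limit comes from the text, while the paging limit of 1,000 pages per second, the definition of "sustained" as 90% of samples, and all function names are assumptions made for the example. The samples would typically come from counters such as % Processor Time and Pages/sec.

```python
def sustained_above(samples, threshold, min_fraction=0.9):
    """Return True if at least min_fraction of the samples exceed threshold."""
    if not samples:
        return False
    over = sum(1 for value in samples if value > threshold)
    return over / len(samples) >= min_fraction


def cas_recommendations(cpu_percent, pages_per_sec,
                        cpu_limit=65.0, paging_limit=1000.0):
    """Suggest capacity changes for a Client Access server.

    cpu_limit follows the text; paging_limit is an assumed placeholder
    that should be replaced with a value from your own baseline.
    """
    advice = []
    if sustained_above(cpu_percent, cpu_limit):
        advice.append("add processing power or another Client Access server")
    if sustained_above(pages_per_sec, paging_limit):
        advice.append("add memory")
    return advice


# Hypothetical samples taken at regular intervals.
cpu = [70, 72, 68, 90, 66, 71, 69, 80, 75, 67]
paging = [20, 35, 10, 40, 25, 30, 15, 20, 45, 30]
print(cas_recommendations(cpu, paging))
```

With these sample values only the CPU rule fires, because every CPU sample is above 65% while paging stays well below the assumed limit.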

Unlike Mailbox servers, Client Access servers are usually “commodity” class servers. This means they aren’t likely to have the capacity for memory or CPU that a Mailbox server might have. It is typical to increase the performance of the Client Access servers by simply adding more servers into a load-balanced group.

This is a good example of optimizing a role as opposed to a server that holds a role. As you start to add more services to your Client Access servers, such as Outlook Anywhere or ActiveSync, you will see an increase in CPU usage. Be sure to monitor this load because it allows you to predict when to add capacity to account for the increased load. This prevents your users from experiencing periods of poor performance.

Optimizing Hub Transport Servers

The goal of the Hub Transport server is to transfer data between different Exchange sites. Each site must have a Hub Transport server to communicate with the other sites. Because the Hub Transport server doesn’t store any data locally, its performance is based on how quickly it can determine where to send a message and send it off. The best way to optimize the Hub Transport role is via memory, CPU, and network throughput. The Hub Transport server needs ready access to a global catalog server to determine where to route messages based on the recipients of the messages. Placing a global catalog (GC) in the same site as a busy Hub Transport server is a good idea. Ensure that the Hub Transport server has sufficient memory to quickly move messages into and out of queues. Monitoring available memory and page rate gives you an idea of whether you have enough memory. High-speed network connectivity is also very useful for this role. If you are running a dedicated Hub Transport server in a site and you find that it’s overworked even though it has a fast processor and plenty of memory, consider simply adding a second Hub Transport server to the site because they automatically share the load.

Optimizing Edge Transport Servers

The Edge Transport server is very similar to the Hub Transport server, with the key difference being that it is the connection point to external systems. As such, it has a higher need for processing power because it needs to convert the format of messages from Simple Mail Transfer Protocol (SMTP) to Messaging Application Programming Interface (MAPI) for internal routing. Edge Transport servers are often serving “double duty” as antivirus and antispam gateways, thus increasing the need for CPU and memory. The Edge Transport role is one where it is very common to optimize the service by deploying multiple Edge Transport servers. This not only increases a site’s capacity for sending mail into and out of the local environment, but it also adds a layer of redundancy.

To fully optimize this role, consider running Edge Transport servers in two geographically disparate locations. Utilize multiple MX records to balance out the load of mail coming into the company. Use your route costs to control the outward flow of mail such that you can reduce the number of hops needed for mail to leave the environment.
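The way multiple MX records spread inbound mail can be illustrated with a short sketch of how a sending server typically orders its delivery targets: lowest preference value first, with hosts of equal preference tried in random order. The host names and preference values below are hypothetical, and the function is a simplified model of sender behavior, not part of Exchange.

```python
import random


def order_mx_targets(mx_records, rng=random):
    """Order (preference, host) pairs the way a sending server tries them.

    Hosts are shuffled first; the stable sort by preference then keeps the
    random order among hosts that share the same preference value.
    """
    shuffled = list(mx_records)
    rng.shuffle(shuffled)
    return [host for _, host in sorted(shuffled, key=lambda record: record[0])]


# Hypothetical records: two equal-preference Edge Transport servers in
# different locations, plus a lower-priority fallback.
records = [
    (10, "edge1.example.com"),
    (10, "edge2.example.com"),
    (20, "edge3.example.com"),
]
print(order_mx_targets(records))
```

Over many deliveries, edge1 and edge2 each receive roughly half of the first attempts, while edge3 is contacted only when both preferred hosts are unavailable.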

Keep a close eye on CPU utilization as well as memory paging to know when you need to add capacity to this role. Utilizing content-based rules or running message filtering increases the CPU and memory requirements of this role.

Optimizing Unified Messaging Servers

The Unified Messaging server is a new role in the Exchange world. In the past, this type of functionality was always performed by a third-party application. In Exchange 2007, this ability to integrate with phone systems and voice mail systems is built in. As you might expect, to optimize this role, you must optimize the ability to quickly transfer information from one source to another. This means that the Unified Messaging role needs to focus on sufficient memory, CPU, and network bandwidth. To fully optimize Unified Messaging services, strongly consider running multiple network interfaces in the Unified Messaging server. This allows one network to talk to the phone systems and the other to talk to the other Exchange servers. Careful monitoring of memory paging, CPU utilization, and NIC utilization allows you to quickly spot any bottlenecks in your particular environment.

General Optimizations

Certain bits of advice can be applied to optimizing any server in an Exchange 2007 environment. For example, the elimination of unneeded services is one of the easiest ways to free up CPU, memory, and disk resources. Event logging should be limited to the events you care about and you should be very careful about running third-party agents on your Exchange 2007 servers.

Event logs should be reviewed regularly to look for signs of any problems. Disks that are generating errors should be replaced and problems that appear in the operating system should be addressed immediately.

Optimizing Active Directory from an Exchange Perspective

As you likely already know, Exchange 2007 is very dependent on Active Directory for routing messages between servers and for allowing end users to find each other and to send each other mail. The architecture of Active Directory can have a large impact on how Exchange performs its various functions.

When designing your Exchange 2007 environment, consider placing dedicated global catalog servers into an Active Directory site that contains only the GCs and the local Exchange servers. Configure your site connectors in AD with a high enough cost that the GCs in this site won’t adopt another nearby site that doesn’t have GCs. This ensures that the GCs are only used by the Exchange servers. This can greatly improve the lookup performance of the Exchange server and greatly benefits your OWA users as well.

In the case of a very large Active Directory environment, for example 20,000 or more objects, consider upgrading the domain controllers to run Windows Server 2003 64-bit. This is because a directory this large can grow to be larger than 3GB. When the Extensible Storage Engine database that holds Active Directory grows to this size, it is no longer able to cache the entire directory. This increases lookup and response times for finding objects in Active Directory. By running a 64-bit operating system on the domain controller, you can utilize the larger memory space to cache the entire directory. The nice thing in this situation is that you retain compatibility with 32-bit domain controllers, so it is not necessary to upgrade the entire environment, only sites that will benefit from it.

Monitoring Exchange Server 2007

A variety of built-in Microsoft tools are available to help an administrator establish the baseline of the Exchange Server 2007 environment. Among these, the Performance Monitor Microsoft Management Console (MMC) snap-in is one of the most common tools used to measure the capacity requirements of Exchange Server 2007. This MMC tool is built in to Windows Server 2003.

Using the Performance Monitor Console

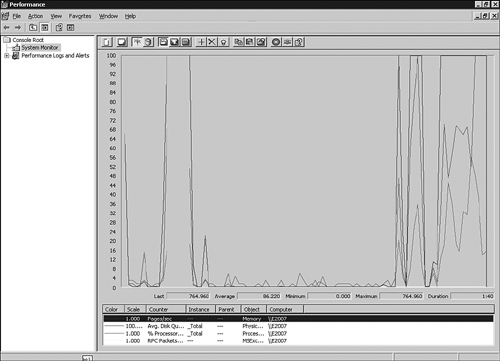

The Performance snap-in enables an in-depth analysis of every measurable aspect of the Exchange server. The information that is gathered using the Performance snap-in can be presented in a variety of forms, including reports, real-time charts, or logs, which add to the versatility of this tool. The resulting output formats enable an administrator to present a baseline analysis in real time or through historical data. The Performance snap-in, shown in Figure 1, can be launched from the Start, Administrative Tools menu.
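Once counter data has been logged, a baseline can be summarized from the historical samples. The following sketch assumes the log has been exported to a simple CSV file with a timestamp column followed by one column per counter; the column names and values shown are hypothetical, and a real export will use the full counter paths as column headers.

```python
import csv
import io
import statistics

# Hypothetical export: a timestamp column plus one column per counter.
SAMPLE_LOG = "\n".join([
    "timestamp,cpu_percent,pages_per_sec",
    "2007-03-01 09:00,20,10",
    "2007-03-01 09:15,30,20",
    "2007-03-01 09:30,40,30",
    "2007-03-01 09:45,30,20",
])


def baseline(csv_text, time_column="timestamp"):
    """Return the mean and maximum of every counter column in the log."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    summary = {}
    for name in rows[0]:
        if name == time_column:
            continue
        values = [float(row[name]) for row in rows]
        summary[name] = {"mean": statistics.mean(values), "max": max(values)}
    return summary


for counter, stats in baseline(SAMPLE_LOG).items():
    print(counter, stats)
```

Comparing later measurements against these mean and peak values is one simple way to notice when a server has drifted away from its established baseline.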

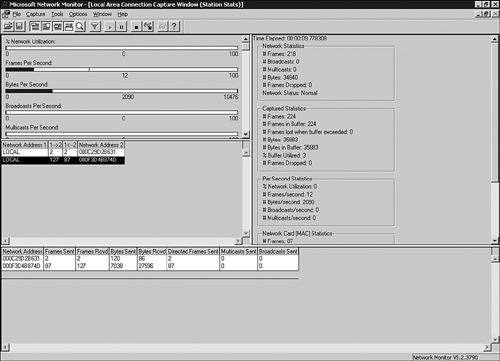

Using Network Monitor

The Network Monitor, as illustrated in Figure 2, is a reliable capacity-analysis tool used specifically to monitor network traffic. Two flavors of the Network Monitor are available: one that is built in to Windows Server 2003 and one that is provided in Systems Management Server (SMS). The one included with Windows Server 2003 is a scaled-down version. It is capable of monitoring network traffic to and from the local server on which it runs. The SMS version monitors network traffic coming to or from any computer on the network and enables you to monitor network traffic from a centralized machine. This facilitates gathering capacity-analysis data, but it is also important to note that it could present possible security risks because of its ability to promiscuously monitor traffic throughout the network.

The one built in to Windows can be installed via the Add/Remove Programs interface and is accessible via the Administrative Tools.

There are also third-party network monitoring tools, such as Ethereal (since renamed Wireshark), that are very useful for monitoring network performance because they pick up things such as excessive retransmits between hosts, CRC errors, or odd protocol transmissions that could affect the performance of Exchange 2007 servers.

Using Task Manager

Task Manager displays real-time performance metrics, so an administrator can quickly get an overall idea of how the Exchange 2007 server is performing at any given time. Its biggest downfall, however, is that it does not store any historical data, so it is not a suitable tool for capacity-analysis purposes. Task Manager is typically used as a quick check to see if anything is out of the ordinary. If a server appears to be running slow, the Processes tab in Task Manager allows you to sort the processes by CPU or Memory use and quickly see if something is noticeably different from its baseline value. This is a quick way to spot common issues like an antivirus scanner taking up all the CPU time or an lsass.exe process using an excessive amount of memory.
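The same comparison against a baseline can be scripted when the process figures have been captured by other means. The sketch below is only an illustration: the memory figures, the avscan.exe process name, and the rule that flags anything above twice its baseline are all assumptions chosen for the example.

```python
def out_of_baseline(current, baseline, factor=2.0):
    """Return (process, value) pairs that exceed factor times their baseline.

    Processes with no recorded baseline are also flagged. Results are sorted
    with the largest consumer first, like sorting a Task Manager column.
    """
    flagged = []
    for name, value in current.items():
        expected = baseline.get(name)
        if expected is None or value > expected * factor:
            flagged.append((name, value))
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical memory use in MB; avscan.exe stands in for an antivirus scanner.
baseline_mb = {"store.exe": 7500, "lsass.exe": 150, "avscan.exe": 120}
current_mb = {"store.exe": 8000, "lsass.exe": 900, "avscan.exe": 400}

print(out_of_baseline(current_mb, baseline_mb))
# [('lsass.exe', 900), ('avscan.exe', 400)]
```

Here store.exe is not flagged because its memory use is close to its usual value, whereas lsass.exe and the scanner stand out immediately.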